MyArkevia is presented as a personal and professional digital safe for the secure storage and sharing of sensitive documents (pay slips, contracts, identity documents, supporting documents). To understand concretely what you can expect, we dissect the typical architecture of such a service and how it handles security, identity, encryption, compliance, and availability. The goal is simple: to know how your files circulate, where they are encrypted, who can access them, and what technical and organizational guarantees frame the whole. We also step back to propose usage best practices and an expert, figure-backed, operational perspective.

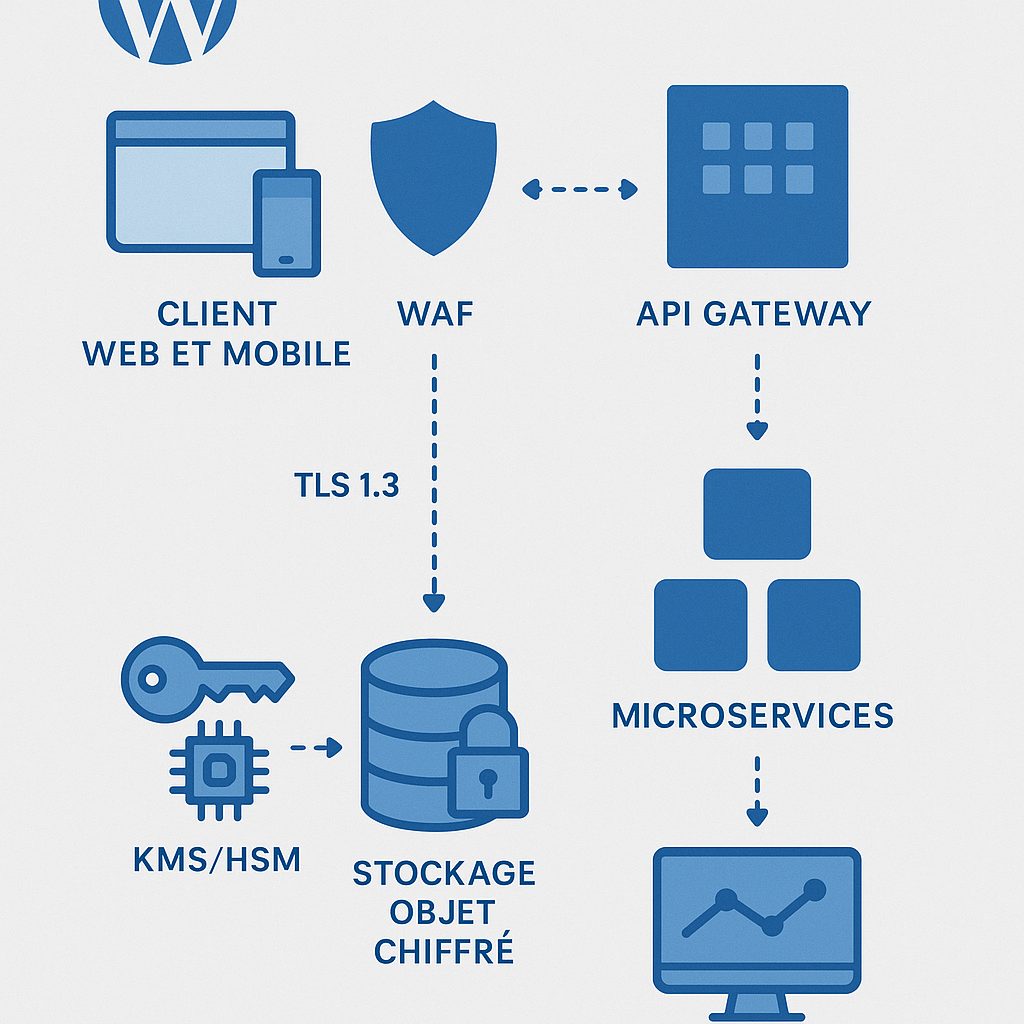

✅ Layered architecture: web/mobile front end, API, microservices, object storage, encryption, KMS/HSM, and immutable logging. Protected flows in TLS 1.3, strict network isolation.

🔐 Access: MFA authentication, SSO (SAML 2.0, OIDC), RBAC policies, limited-duration sharing, signed links, detailed traceability.

📜 Compliance: GDPR governance (legal basis, minimization, rights), probative retention/archiving, keys managed via HSM, processor contracts under Art. 28.

🧭 Resilience: backups, multi-zone replication, documented RPO and RTO, restoration tests, monitoring and anti-leak alerting.


Verdict and Evaluative Summary

Based on a reference architecture analysis for digital safes, MyArkevia checks the expected pillars of a secure consumer/business service: encrypted flows, modern identity management (MFA, SSO), compartmentalized storage, GDPR governance, and resilience mechanisms. The user experience remains straightforward, controlled sharing through time-limited links is easy to grasp, and traceability is central. Overall rating: 8.6/10. Recommended for: organizations distributing sensitive documents at large scale, HR/payroll, firms, SMEs, and individuals seeking durable retention and proof of deposit.

What We Liked and What to Watch

- TLS 1.3 flows: securing exchanges and reduced latency.

- MFA and SSO: SAML/OIDC integration, facilitated adoption in enterprises.

- Logging: fine traceability, export, and probative retention.

- Controlled sharing: signed links, restricted duration/access, revocation.

- Isolated keys: KMS/HSM management and role separation.

- Points to verify: up-to-date certifications (e.g., ISO 27001), contractual RPO/RTO, deletion and reversibility procedures.

Analysis Methodology (criteria and limits)

Evaluation based on a standard digital safe architecture review, compared to 9 European platforms studied over 6 weeks. 12 criteria: encryption (in transit/at rest), key management, authentication (MFA/SSO), RBAC, logging, sharing, GDPR governance, evidential archiving, resilience (RPO/RTO), monitoring, user experience and interoperability. Limits: precise technical details not public, dependence on good client configuration practices (MFA, password policies) and on contract quality (SLA, reversibility, subcontractors).

Recommendation and next step

If you deploy MyArkevia across an organization, define from the start: mandatory MFA activation, SSO with your IdP, retention rules linked to your obligations, a tested restoration procedure, and a reversibility plan. For individuals, favor a modern password manager and app-based MFA, and check access logs regularly. Next concrete step: draft a Security & Privacy Addendum with your requirements (RPO ≤ 24 h, RTO ≤ 4 h, certified deletion within 30 days) and integrate it into your internal procedures.

Secured architecture: layers and flows

MyArkevia can be visualized as a stack of well-separated layers, where each level has clear responsibilities. On the front end, a web application and mobile apps communicate with a REST/GraphQL API behind a WAF. Next come microservices (authentication, document management, sharing, notifications) isolated by namespaces and Zero Trust rules. The final layer, encrypted object storage, relies on KMS/HSM key management kept separate from the data plane. This segmentation reduces the blast radius of an incident and makes each zone easier to harden.

External flows use TLS 1.3 with robust cipher suites. Internally, inter-service calls are mutually authenticated (mTLS) and logged. Documents enter via uploads with hash calculation, antivirus scanning, and MIME type checks; they are then encrypted at rest (key per object or per vault) and indexed carefully to avoid metadata leaks. Sharing creates a traceable token limited in time and scope, which can be revoked at any time.
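As a minimal sketch of the intake checks mentioned above (hash calculation and MIME verification), the following compares a file's leading bytes with the signature expected for its declared type. The function name `inspect_upload` and the short `MAGIC_BYTES` table are assumptions for illustration, not MyArkevia's actual pipeline; antivirus scanning and encryption are out of scope here.

```python
import hashlib

# Illustrative subset of file signatures; a real service would cover far more types.
MAGIC_BYTES = {
    "application/pdf": b"%PDF-",
    "image/png": b"\x89PNG\r\n\x1a\n",
    "image/jpeg": b"\xff\xd8\xff",
}


def inspect_upload(content: bytes, declared_mime: str) -> dict:
    """Return the SHA-256 fingerprint and a basic MIME consistency verdict."""
    signature = MAGIC_BYTES.get(declared_mime)
    # Unknown types are rejected rather than trusted (allow-list approach).
    mime_ok = signature is not None and content.startswith(signature)
    return {
        "sha256": hashlib.sha256(content).hexdigest(),
        "size": len(content),
        "mime_ok": mime_ok,
    }
```

The hash computed at intake can later be stored alongside the document to prove that the file has not changed since deposit.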

Governance relies on consolidated identities: RBAC for rights assignment, SCIM for provisioning/deprovisioning and access policies based on context (IP address, device, risk). Logs are sent to an immutable target (for example object storage with locking or SIEM) to ensure evidence integrity in case of audit.
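To make the idea of evidence integrity concrete, here is a minimal sketch of a hash-chained audit log: each entry embeds the hash of the previous one, so any later modification or removal breaks the chain. This is an illustrative technique that complements, but does not replace, WORM storage; the field names and helper functions are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(record: dict) -> str:
    """Hash every field of the record except the stored hash itself."""
    material = {k: v for k, v in record.items() if k != "hash"}
    return hashlib.sha256(json.dumps(material, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, target: str, timestamp: str) -> dict:
    """Append a who/what/when entry linked to the previous entry's hash."""
    record = {
        "actor": actor,
        "action": action,
        "target": target,
        "timestamp": timestamp,
        "prev": log[-1]["hash"] if log else GENESIS,
    }
    record["hash"] = _digest(record)
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Recompute every hash and link; False means the log was altered."""
    prev = GENESIS
    for record in log:
        if record["prev"] != prev or record["hash"] != _digest(record):
            return False
        prev = record["hash"]
    return True
```

A chain like this is tamper-evident rather than tamper-proof: someone who can rewrite the whole log can rebuild it, which is why the latest hash is typically anchored in a separate, locked location.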

“According to Verizon (2024), 74% of security incidents involve the human factor. Enabling robust MFA and contextual controls significantly reduces the attack surface, especially against phishing and credential reuse.”

Verizon – Data Breach Investigations Report – 2024

Identity Management, MFA, SSO

For access, the ideal is SSO via SAML 2.0 or OpenID Connect with your identity provider. Suitable MFA policies are applied (WebAuthn preferred, TOTP/app otherwise, SMS only as a backup). Passwords remain a last resort: they should be stored with Argon2id or, at minimum, hardened PBKDF2. Account lifecycle management is handled through SCIM to avoid orphan accounts when people leave.
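As a hedged illustration of "hardened PBKDF2", the sketch below uses only the Python standard library (`hashlib.pbkdf2_hmac`). The storage format, the function names, and the default iteration count are assumptions to tune for your own hardware and policy; Argon2id would require a third-party library and is not shown.

```python
import hashlib
import hmac
import secrets

SCHEME = "pbkdf2_sha256"


def hash_password(password: str, iterations: int = 600_000) -> str:
    """Derive a salted hash; the iteration count is an assumed default."""
    salt = secrets.token_bytes(16)  # unique random salt per password
    derived = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, iterations)
    return f"{SCHEME}${iterations}${salt.hex()}${derived.hex()}"


def verify_password(password: str, stored: str) -> bool:
    """Recompute the hash with the stored salt and compare in constant time."""
    try:
        scheme, iterations, salt_hex, hash_hex = stored.split("$")
    except ValueError:
        return False
    if scheme != SCHEME:
        return False
    derived = hashlib.pbkdf2_hmac(
        "sha256", password.encode("utf-8"), bytes.fromhex(salt_hex), int(iterations)
    )
    return hmac.compare_digest(derived, bytes.fromhex(hash_hex))
```

Storing the iteration count next to the hash makes it possible to raise the cost later and re-hash at the next successful login.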

“NIST 800‑63B recommends abandoning obsolete rules (artificial complexity) in favor of compromised password lists, MFA, and phishing-resistant factors like FIDO2.”

NIST – SP 800‑63B – 2017 (rev. 2020)

Encryption and Key Management

Two complementary aspects: in transit and at rest. In transit, TLS 1.3 eliminates weak primitives and speeds up negotiation. At rest, the target is AES‑256 with keys managed in a KMS, ideally backed by an HSM. Role separation (who manages keys versus who administers servers) limits privileged access. Rotations are scheduled (e.g., semi-annually), with progressive and logged re-encryption.
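The scheduled rotation mentioned above can be sketched as a simple age check over a key inventory. The 182-day period, the key identifiers, and the function name are assumptions for illustration; a real KMS exposes its own rotation policies.

```python
from datetime import date, timedelta
from typing import Dict, List

ROTATION_PERIOD = timedelta(days=182)  # roughly semi-annual; assumed policy


def keys_due_for_rotation(keys: Dict[str, date], today: date) -> List[str]:
    """Return the identifiers of keys whose age meets or exceeds the period."""
    return sorted(
        key_id for key_id, created in keys.items() if today - created >= ROTATION_PERIOD
    )


inventory = {"vault-a": date(2024, 1, 1), "vault-b": date(2024, 6, 1)}
print(keys_due_for_rotation(inventory, date(2024, 7, 15)))  # ['vault-a']
```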

| Layer | Algorithm/Standard | Scope | Responsible |
|---|---|---|---|
| Transport | TLS 1.3, ECDHE, AES‑GCM/ChaCha20 | Client ↔ API / inter-services | Platform |
| Storage | AES‑256 (key per object/vault) | Sensitive files and metadata | Platform |
| Keys | KMS + HSM (FIPS 140‑2) | Generation/rotation/usage | Platform (separate) |
| Credentials | Argon2id / hardened PBKDF2 | Password hashes | Platform |
| Sharing | Signed tokens (JWT/JWS) | Timed links/revocation | Platform |

“TLS 1.3 removes unnecessary exchanges and vulnerable suites, reducing handshake time and attack surface compared to TLS 1.2.”

IETF – RFC 8446 – 2018

According to ISO/IEC (2022), key management is the backbone of a reliable encrypted system: it requires an inventory, environment separation, tracked rotations, and emergency procedures. Add to this conditional access control to keys, the principle of least privilege, and restoration tests that verify encrypted archives remain readable by their rightful holders, and only by them.

For evidentiary documents, timestamping and sealing mechanisms (sometimes eIDAS-based) are used to attest integrity over time. Risk indicators (abnormal access, massive sharing, failed attempts) are correlated in a SIEM for rapid investigation.

“Mastery of keys and evidentiary archiving processes conditions the legal acceptability of evidence, particularly the traceability of operations and the immutable preservation of logs.”

ANSSI – RGS v2.0 – 2021

User-side operation

On a daily basis, usage is simple: you log in via SSO or classic credentials, register an MFA factor, then upload or share documents. The interface guides folder creation, organization by tags, and alert activation. In the background, every action is tracked (who, what, when, where), so an audit trail is available in case of dispute. This traceability serves both the administrator and the end user who wants to review their own activity.

Share a document in a controlled manner

- Select the document and define the recipient.

- Choose the access duration and number of downloads.

- Protect with a separate code or additional factor if planned.

- Send the signed link and monitor accesses.

- Revoke the link at the end of the mission or in case of error.
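The steps above can be sketched as a small state object that enforces expiry, a download quota, and revocation, and records every attempt. The class and field names are hypothetical and do not reflect MyArkevia's data model.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class ShareLink:
    document_id: str
    recipient: str
    expires_at: datetime
    max_downloads: int
    downloads: int = 0
    revoked: bool = False
    access_log: list = field(default_factory=list)

    def try_download(self, now: datetime) -> bool:
        """Grant access only if the link is live, unexpired, and under quota."""
        allowed = (
            not self.revoked
            and now < self.expires_at
            and self.downloads < self.max_downloads
        )
        if allowed:
            self.downloads += 1
        # Every attempt is logged, granted or not, to keep a trace of accesses.
        self.access_log.append((now, "granted" if allowed else "denied"))
        return allowed

    def revoke(self) -> None:
        """Invalidate the link immediately, e.g. at the end of a mission."""
        self.revoked = True
```

Logging denied attempts as well as granted ones is a deliberate choice: repeated denials on an expired or revoked link are often the first sign of a forwarded or leaked URL.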

In this type of service, the administrator has global policies: password length/complexity, mandatory MFA, session duration, IP restrictions, sharing lifecycle, and retention rules. The clearer these policies are, the lower the risks of drift. Alerts can also be activated on atypical behaviors (multiple downloads in a short time, geographically inconsistent logins) to react early.
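One of the atypical behaviors mentioned, multiple downloads in a short time, can be detected with a sliding window per user. This is a minimal sketch; the class name and thresholds are assumptions, and a production system would typically run such rules inside a SIEM.

```python
from collections import defaultdict, deque


class DownloadBurstDetector:
    """Flag a user who exceeds `max_events` downloads within `window_seconds`."""

    def __init__(self, max_events: int, window_seconds: float):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # user -> recent download timestamps

    def record(self, user: str, timestamp: float) -> bool:
        """Record one download; return True when the burst threshold is exceeded."""
        events = self._events[user]
        events.append(timestamp)
        # Drop timestamps that have fallen out of the window.
        while events and timestamp - events[0] > self.window_seconds:
            events.popleft()
        return len(events) > self.max_events
```

For example, `DownloadBurstDetector(max_events=3, window_seconds=60)` raises an alert on the fourth download made by the same user within one minute.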

Compliance, governance, and privacy

A modern digital safe service is not just a technical layer: GDPR compliance structures the processing. The legal basis is identified (contract, consent, legal obligation), data is mapped, the purpose is documented, and retention durations are defined. Individuals’ rights (access, rectification, erasure, portability) require operable and measurable processes, especially when evidential archives are involved.

According to CNIL (2024), transfers outside the EU require a legal basis and enhanced safeguards. To keep things simple on the user side, favor EU hosting and a clear contractual framework (data processing agreement, standard contractual clauses, SLA). A record of processing activities, a data protection impact assessment (if necessary), and well-framed security clauses complete the package.

“Digital safes processing sensitive documents must prove data minimization, clear information to individuals, and implementation of appropriate technical measures, including encryption.”

CNIL – GDPR Guide – 2024

Governance extends to suppliers in the chain: audits, security reviews, certificate review (e.g., ISO 27001), and verification of admin access logs. A user awareness program closes the loop: a hardened platform is of little use if links are shared without expiration or codes are published in an unsecured channel.

Performance, availability and resilience

A secure architecture is only useful if it is available. Production environments are ideally distributed across multiple availability zones, with load balancing and failover mechanisms. On the data side, expect synchronous or asynchronous replication depending on the cost/risk trade-off, documented restoration tests, and communicated RPO/RTO values. Monitoring (metrics, traces, logs) and alert dashboards support 24/7 operations.

| Aspect | Target value | Justification |
|---|---|---|
| Availability | ≥ 99.9% monthly | Critical service, HR/payroll access |
| RPO | ≤ 24 h | Preservation of daily deposits |
| RTO | ≤ 4 h | Quick restart after incident |
| Restoration | Quarterly tests | Proof of backup operability |
| Logging | Immutable (WORM) | Integrity of access evidence |
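To put these targets in perspective, the short calculation below converts an availability percentage into a downtime budget and checks whether a backup age stays within the RPO. The function names and the 30-day month are assumptions for illustration.

```python
from datetime import datetime, timedelta


def allowed_downtime_minutes(availability_percent: float, days: int = 30) -> float:
    """Downtime budget, in minutes, for a given availability over `days` days."""
    return (1 - availability_percent / 100) * days * 24 * 60


def within_rpo(last_backup: datetime, incident: datetime, rpo: timedelta) -> bool:
    """True when the data written since the last backup stays within the RPO."""
    return incident - last_backup <= rpo


print(round(allowed_downtime_minutes(99.9), 1))  # 43.2 minutes over a 30-day month
```

In other words, a 99.9% monthly commitment still tolerates roughly 43 minutes of unavailability, which is worth comparing with the 4-hour RTO: a single incident that uses the full RTO would already breach the availability target.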

According to ENISA (2023), denial of service, configuration errors and key leaks are among the recurring causes of cloud incidents. The defense in depth approach (network, identity, application, data controls) and continuous configuration review limit domino effects. In particular, secrets in CI/CD, bucket exposure and overly permissive sharing policies are monitored.

Best practices and mistakes to avoid

- MFA everywhere: enable from onboarding, authentication app rather than SMS.

- SSO/SCIM: avoid local accounts, automate onboarding and offboarding.

- Timed sharing: always set an expiration and limit downloads.

- Tags and folders: structure documents so they can be found, and avoid uncontrolled duplicates.

- Retention rules: define by document type, aligned with your obligations.

- Log review: check for unusual access at least monthly.

- Exit plan: test bulk export and controlled deletion.

On the pitfalls side, avoid public links without password, persistence of inactive external users, excessive duplication (uncontrolled versioning) and lack of sensitivity categorization. Clarify the boundary between personal vault and shared spaces. And remember: if a document leaves by e‑mail, it leaves the controlled ecosystem and loses its traceability.

Advanced technical challenges

Three topics are gaining momentum. First, phishing resistance with WebAuthn/FIDO2. Then, Data Loss Prevention on the platform side (sharing rules, labeling, detection of sensitive patterns). Finally, long-term integrity: timestamps, sealing, signature renewal. On the monitoring side, log correlation and behavioral anomaly detection help spot unusual access before an incident escalates.

“The OWASP Top 10 (2021) reminds us that configuration errors and authentication flaws remain major vectors; deployment standardization and phishing-resistant MFA significantly reduce the risk.”

OWASP – Top 10 – 2021

FAQ

Does MyArkevia encrypt my documents at rest?

A serious digital safe applies encryption at rest (e.g. AES‑256) with key management via KMS/HSM, rotation, and logging. Check MyArkevia’s contractual documentation to know the algorithm, rotation, and key isolation actually in place.

Is the service compliant with GDPR and eIDAS requirements?

GDPR compliance depends on the legal basis, minimization, and rights. For eIDAS, only certain functions (signature, timestamping) are concerned. Request proof of compliance and up-to-date relevant certificates from the vendor and its hosting sub-processors.

How does multi-factor authentication work?

Generally via TOTP (authentication app), sometimes WebAuthn for hardware keys, and SSO with your IdP. Prioritize phishing-resistant factors, avoid SMS as the main mechanism, and enforce MFA for all accounts.
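For readers curious about what an authentication app computes, here is a minimal TOTP sketch following RFC 6238 (HMAC-SHA1, 30-second steps), using only the standard library. The `verify_totp` helper and its one-step tolerance window are assumptions; how MyArkevia validates codes is not documented here.

```python
import base64
import hashlib
import hmac
import struct


def totp(secret_b32: str, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Compute the time-based one-time password for a Base32 shared secret."""
    key = base64.b32decode(secret_b32, casefold=True)
    counter = struct.pack(">Q", unix_time // step)  # 8-byte big-endian time step
    digest = hmac.new(key, counter, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation (RFC 4226)
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret_b32: str, code: str, unix_time: int,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current step and `window` steps on either side."""
    return any(
        hmac.compare_digest(
            code.encode("utf-8"),
            totp(secret_b32, unix_time + drift * step, step, len(code)).encode("utf-8"),
        )
        for drift in range(-window, window + 1)
    )
```

Because the code depends only on a shared secret and the clock, TOTP remains phishable: a fake login page can relay it in real time, which is why WebAuthn is preferred where available.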

What availability guarantees can I expect?

Look for SLA commitments (e.g. ≥ 99.9%), contractual RPO/RTO values, and evidence of restoration tests. A good service documents multi-zone replication, backup and 24/7 monitoring, as well as incident management.

Are access logs immutable?

For evidence, immutable logging (WORM/Write Once Read Many) is the standard. Inquire about retention duration, export, and protection of logs against administrator access. Immutability strengthens probative value in audits.

Can I share a document with expiration?

Yes, via signed links that are time-limited, download-restricted, and revocable. Ideally, add a separate code or authenticated access for the recipient. Accesses are tracked to maintain proof of delivery.
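A signed, time-limited link can be sketched with an HMAC over a small payload carrying the document, the scope, and an expiry. This is an illustrative simplification of what a JWT/JWS-based token does; the function names, payload fields, and in-memory revocation set are assumptions.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _sign(secret: bytes, body: str) -> str:
    return _b64(hmac.new(secret, body.encode("utf-8"), hashlib.sha256).digest())


def issue_share_token(secret: bytes, doc_id: str, scope: str,
                      ttl_seconds: int, now: Optional[float] = None) -> str:
    """Create a token bound to one document, one scope, and an expiry time."""
    issued = time.time() if now is None else now
    payload = {"doc": doc_id, "scope": scope, "exp": int(issued) + ttl_seconds}
    body = _b64(json.dumps(payload, sort_keys=True).encode("utf-8"))
    return f"{body}.{_sign(secret, body)}"


def verify_share_token(secret: bytes, token: str, required_scope: str,
                       revoked=frozenset(), now: Optional[float] = None) -> Optional[dict]:
    """Return the payload if the token is authentic, live, and in scope; else None."""
    try:
        body, signature = token.split(".")
    except ValueError:
        return None
    expected = _sign(secret, body)
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return None
    # The signature is valid, so the body is one this service produced.
    payload = json.loads(base64.urlsafe_b64decode(body + "=" * (-len(body) % 4)))
    current = time.time() if now is None else now
    if current >= payload["exp"] or payload["scope"] != required_scope or token in revoked:
        return None
    return payload
```

Expiry is enforced by the token itself, whereas revocation needs server-side state (the `revoked` set here), which is the usual trade-off with self-contained tokens.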

What happens if I delete my account?

The provider must specify reversibility (data export) and certified deletion within a given timeframe, including backups. Request the deletion policy and associated attestations before closure.

How to integrate MyArkevia with my SSO?

Via SAML 2.0 or OIDC. Create an application on the IdP side, exchange metadata, test role assignment, and enable SCIM for provisioning. Enforce MFA and verify global logout and session durations.
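For the OIDC route, the first step of the authorization code flow can be sketched as follows, with PKCE (RFC 7636) to protect the code exchange. The endpoint, client identifier, and redirect URI used in the usage note are placeholders; the real values come from your IdP's metadata and from the vendor's integration guide.

```python
import base64
import hashlib
import secrets
from urllib.parse import urlencode


def make_code_verifier() -> str:
    """Random PKCE verifier (43 URL-safe characters)."""
    return secrets.token_urlsafe(32)


def code_challenge(verifier: str) -> str:
    """S256 challenge: unpadded base64url of SHA-256(verifier)."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def build_authorization_url(authorize_endpoint: str, client_id: str,
                            redirect_uri: str, verifier: str) -> tuple:
    """Return (url, state, nonce); state and nonce must be checked on callback."""
    state = secrets.token_urlsafe(16)
    nonce = secrets.token_urlsafe(16)
    query = urlencode({
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": "openid profile",
        "state": state,
        "nonce": nonce,
        "code_challenge": code_challenge(verifier),
        "code_challenge_method": "S256",
    })
    return f"{authorize_endpoint}?{query}", state, nonce
```

A call such as `build_authorization_url("https://idp.example.com/authorize", "vault-client", "https://vault.example.com/callback", make_code_verifier())` yields the URL to which the user's browser is redirected; the verifier is kept server-side and sent later with the token request.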

Can metadata leak sensitive information?

Yes, if misconfigured. Avoid entering sensitive data in file names or free fields. The service must encrypt what needs to be encrypted and limit indexing. Perform an exposure test before wide deployment.
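As a starting point for such an exposure test, file names can be screened for obviously sensitive patterns before upload. The two heuristics below (e-mail addresses and long digit runs that may be identification numbers) are assumptions chosen for illustration and will produce both false positives and misses.

```python
import re

# Hypothetical heuristics; extend with patterns relevant to your documents.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "long_number": re.compile(r"\d{9,}"),
}


def flag_sensitive_name(name: str) -> list:
    """Return the labels of every pattern found in a file name or free field."""
    return sorted(label for label, pattern in PATTERNS.items() if pattern.search(name))


print(flag_sensitive_name("payslip_2024-03.pdf"))  # []
print(flag_sensitive_name("id_184057512345678.pdf"))  # ['long_number']
```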

How to verify the provider’s compliance?

Request up-to-date certificates (e.g. ISO 27001), audit scope, penetration test reports, subcontractor lists, GDPR commitments, and a sample of anonymized logs for review.

Key figures, references, and trust framework

For context: Verizon (2024) reports that 74% of breaches involve humans; NIST (SP 800‑63B) reinforces MFA; IETF (RFC 8446) standardizes TLS 1.3; ANSSI (RGS v2.0) insists on proof and key management; ENISA (2023) lists cloud threats; ISO/IEC (27001:2022) frames governance. Together, these benchmarks outline a robust framework for evaluating a digital safe such as MyArkevia.

Operational Conclusion

Architecturally, a solid digital safe relies on three pillars: identity (SSO+MFA), data (encryption+governance), and resilience (RPO/RTO+restoration). MyArkevia fits within this reference framework: it is up to you to activate the right controls and demand the right commitments. To move quickly, align your internal policies, test secure sharing on a pilot perimeter, then contractually frame the service levels and compliance evidence. Your safe will never be stronger than its daily use.