| Key points | Details to remember |
|---|---|
| 🎮 Mode 7 (SNES) | Technique transforming 2D planes into pseudo-3D for racing and aerial combat |
| 🚀 Special cartridges | Super FX/SA-1 boosted performance for emerging 3D |
| 🎥 90s FMV | Fullscreen videos revolutionizing storytelling on Mega-CD and PS1 |
| 💥 3D acceleration | 3dfx Voodoo cards democratized polygonal rendering on PC |
| 🔊 Spatial audio | Dolby Atmos creates three-dimensional sound environments |
| 💡 Ray tracing | Physically realistic lights and reflections in real time (RTX/PS5) |
| ✋ DualSense haptics | Differentiated tactile feedback simulating textures and resistances |

The evolution of video games is a technological saga where each decade brings its share of silent revolutions. Behind our screens, engineering feats have transformed static pixels into living worlds, elevating the medium from simple entertainment to a total sensory experience. A look back at eight quantum leaps that redefined what a game could be – from the algorithmic cleverness of Mode 7 to the photonic calculations of modern ray tracing.

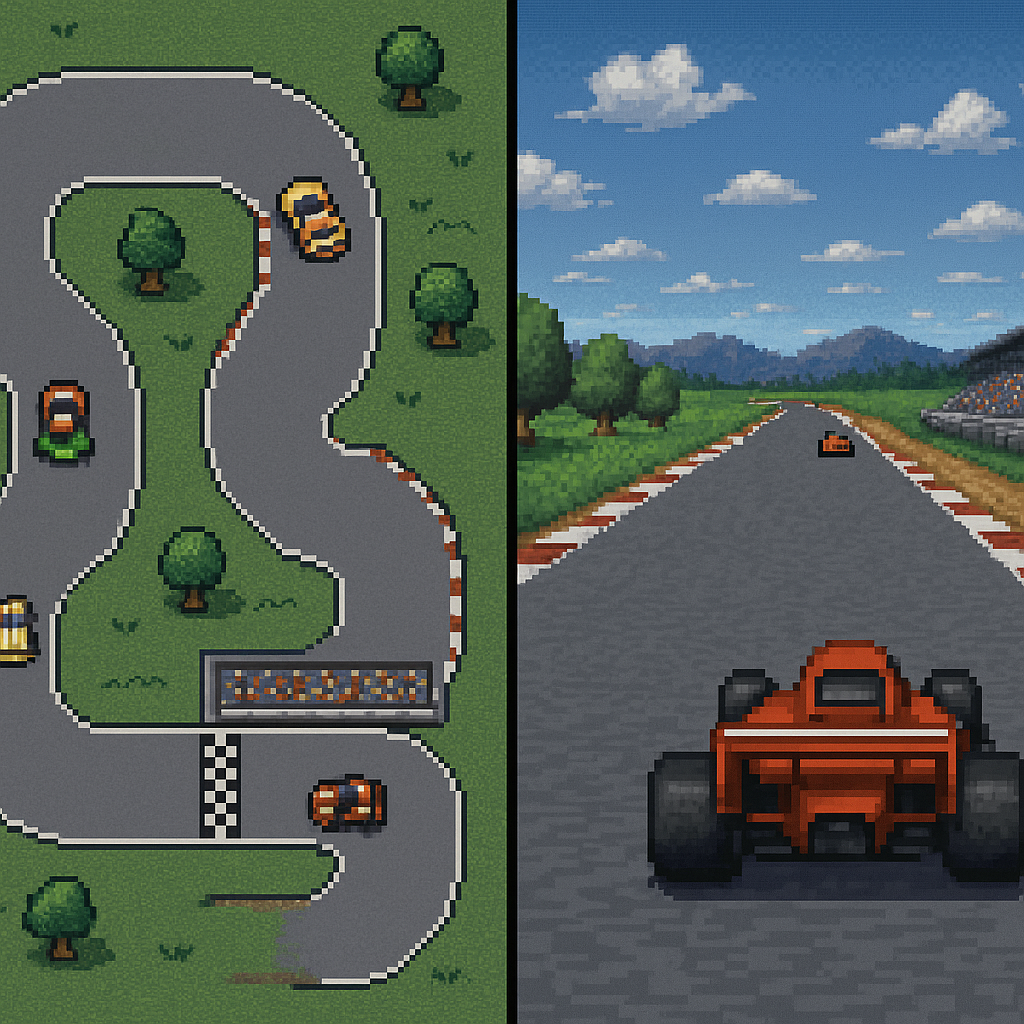

Mode 7 & rotation/scaling effects (SNES)

The Super Nintendo created a convincing illusion of 3D without any dedicated 3D hardware. Its secret? Mode 7, a graphics mode that can rotate and scale a single background layer in real time. By applying a 2×2 transformation matrix to that flat layer, and changing the matrix from one scanline to the next, developers simulated a ground plane receding toward the horizon in F-Zero and Super Mario Kart. Unlike arcade systems that built depth out of scaled sprites, this approach treated the whole track as one large tile map that could be viewed from any angle; Pilotwings used it for the terrain seen from a plane or a hang glider. This mathematical trick laid the foundation for a generation of console racing games. Where Sega's arcade boards relied on hardware sprite scaling for After Burner, Nintendo opted for a more memory-efficient solution.
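
To make the matrix idea concrete, here is a minimal Python sketch of the kind of mapping Mode 7 performs: each screen pixel is turned into a texture coordinate by a 2×2 matrix applied around a pivot point. It is a simplified illustration, not the console's actual register logic: the function names, the floating-point math, and the `camera_height` parameter are assumptions made for this example, and scroll offsets and fixed-point arithmetic are left out.

```python
import math

def mode7_sample(x, y, a, b, c, d, cx, cy):
    """Map a screen pixel (x, y) to a texture coordinate (u, v).

    The 2x2 matrix [[a, b], [c, d]] is applied around the pivot (cx, cy).
    """
    dx, dy = x - cx, y - cy
    u = a * dx + b * dy + cx
    v = c * dx + d * dy + cy
    return u, v

def rotation_zoom(angle_deg, zoom):
    """Matrix coefficients (a, b, c, d) for a rotation plus a uniform zoom."""
    t = math.radians(angle_deg)
    s = 1.0 / zoom  # zooming in means stepping through the texture more slowly
    return (s * math.cos(t), s * math.sin(t), -s * math.sin(t), s * math.cos(t))

def perspective_row_scale(row, horizon, camera_height=64.0):
    """Hypothetical per-scanline scale factor for a pseudo-3D floor.

    Rows close to the horizon get a large factor (texture sampled far away);
    rows near the bottom of the screen get a small one. Requires row > horizon.
    """
    return camera_height / (row - horizon)
```

Recomputing the matrix for every scanline with a factor like `perspective_row_scale` is what turns a flat rotation into a floor that appears to stretch toward the horizon.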

Special cartridges (Super FX, SA-1)

Faced with hardware limitations, publishers built coprocessors directly into the cartridges. The Super FX, a RISC chip designed by Argonaut Games and clocked at roughly 10.7 MHz in its first version, gave the SNES the polygon throughput that its 3.58 MHz CPU lacked. Star Fox was the showcase: flat-shaded polygon ships flying through space at around 15 fps, an unthinkable feat on the console alone. Later, the SA-1 placed a second, faster CPU running at 10.74 MHz in the cartridge, along with extra on-chip memory, which Super Mario RPG relied on for its detailed isometric scenes. These custom solutions anticipated the era of dedicated GPUs: each game literally became its own technological platform.

Full-Motion Video (Mega-CD, PS1)

The advent of the CD-ROM freed developers from the constraints of cartridges, offering up to 650 MB of storage compared with a few megabytes on even the largest cartridges. This windfall fueled the explosion of 90s FMV games: full-screen video sequences that transformed the narrative experience. On Mega-CD, Night Trap and Sewer Shark used real actors to create interactive movies, while the PlayStation raised the bar with studio-quality cinematics in Final Fantasy VII. The optical medium also enabled orchestral soundtracks (Xenogears) and full voice acting (Metal Gear Solid). Although the lossy video compression of the era often produced visible artifacts, it offered a cinematic immersion that marked an entire generation.

3D PC Acceleration (3dfx → RTX)

Before 1996, most 3D PC games were rendered in software, at low resolutions and with blocky, unfiltered textures. The arrival of 3dfx Voodoo graphics cards changed the game: equipped with 4 MB of EDO DRAM and the proprietary Glide API, they rendered Tomb Raider or Quake with sharp textures and bilinear filtering. Their secret? Delegating rasterization to hardware: the card converted each textured triangle into pixels, freeing the CPU for game logic and geometry. This fixed-function architecture evolved into the programmable pipelines of the GeForce line, then into the RT Cores of RTX cards, dedicated to real-time ray tracing. Nvidia pressed its advantage by adding Tensor Cores for DLSS, an AI upscaling technology that compensates for the cost of ray tracing.
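
Bilinear filtering, mentioned above, is easy to sketch in software. The Python function below blends the four texels surrounding a sample point, which is what smooths out the blocky look of unfiltered textures. It is an illustrative sketch only: the function name, the grayscale list-of-rows texture layout, and the clamping at the edges are assumptions for this example, not a description of how the Voodoo hardware was built.

```python
def bilinear_sample(texture, u, v):
    """Sample a grayscale texture at fractional texel coordinates (u, v).

    texture is a list of rows of floats. Coordinates are clamped to the
    texture bounds, then the four nearest texels are blended by distance.
    """
    height, width = len(texture), len(texture[0])
    u = min(max(u, 0.0), width - 1.0)
    v = min(max(v, 0.0), height - 1.0)

    x0, y0 = int(u), int(v)            # top-left texel
    x1 = min(x0 + 1, width - 1)        # right neighbour (clamped)
    y1 = min(y0 + 1, height - 1)       # bottom neighbour (clamped)
    fx, fy = u - x0, v - y0            # fractional position inside the cell

    top = texture[y0][x0] * (1 - fx) + texture[y0][x1] * fx
    bottom = texture[y1][x0] * (1 - fx) + texture[y1][x1] * fx
    return top * (1 - fy) + bottom * fy
```

Sampling a 2×2 checkerboard exactly between its four texels returns the average of the four values, where nearest-neighbour sampling would snap to a single one.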

HD & Blu-ray (PS3)

The PlayStation 3 was a bold technological bet with its integrated Blu-ray drive: 25 GB per layer (50 GB on a dual-layer disc) compared to 8.5 GB on dual-layer DVD. This capacity allowed studios to include higher-resolution textures (The Last of Us), high-definition cinematics (Uncharted 3), and vast open worlds on a single disc (GTA V). Combined with the Cell Broadband Engine, the physical medium helped turn HD into a standard, while the Xbox 360 stayed on DVD and offered HD DVD only through an optional external drive. Blu-ray also became a cultural Trojan horse: Sony won the format war against HD DVD, proving that a console could influence the entire entertainment industry.

Spatial Audio & Atmos

Sound immersion has undergone three revolutions: stereo (16-bit consoles), 5.1 surround (Xbox), then Dolby Atmos. This object-based format uses metadata to position sounds dynamically in 3D space around the listener, including overhead, and home setups can scale up to 34 speakers. In Hellblade II, the voices of Senua's psychosis literally whisper behind the player, while Returnal renders the echoes of its alien corridors with striking precision. Unlike older solutions based on predefined channels, Atmos adapts the mix to your setup, even on headphones via binaural rendering. That flexibility makes the fixed channel panning of 90s sound chips look dated.
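
The difference between a fixed channel and a positioned sound object can be shown with a deliberately tiny Python example. Instead of storing "60% left, 40% right", the sound carries a position (here, just an azimuth), and the gains are derived at playback time for whatever layout is available (here, two speakers). This is a generic constant-power panning sketch, not Dolby's renderer: the function name and the reduction to a single angle are assumptions made for illustration.

```python
import math

def object_gains(azimuth_deg):
    """Left/right gains for a sound object at a given azimuth.

    azimuth_deg runs from -90 (hard left) to +90 (hard right) and is clamped
    to that range. Constant-power panning keeps left**2 + right**2 equal to 1,
    so perceived loudness stays steady as the object moves.
    """
    azimuth_deg = max(-90.0, min(90.0, azimuth_deg))
    pan = (azimuth_deg + 90.0) / 180.0   # 0.0 = left, 1.0 = right
    theta = pan * math.pi / 2
    return math.cos(theta), math.sin(theta)
```

A real object-based renderer does the same kind of computation for many objects, in three dimensions, across every speaker of the listener's setup.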

Real-time ray tracing (RTX, PS5)

For decades, games simulated light through tricks: precalculated lightmaps, cube maps for reflections, or screen-space reflections. Ray tracing changes the game by following rays of light through the scene and computing how they bounce off each surface. Result: natural cast shadows, more realistic global lighting (Cyberpunk 2077), and reflections that capture the off-screen environment (Control). This demanding technology relies on the dedicated RT Cores of Nvidia's RTX cards and, on PC, on APIs such as DirectX Raytracing. The PS5 and Xbox Series consoles support it through their RDNA 2 GPUs, but on a reduced scale: Spider-Man: Miles Morales reserves it mainly for reflections. The current challenge? Containing the cost through faster video memory (GDDR6X) and upscalers like FSR.
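
The two basic operations behind ray-traced reflections fit in a few lines of Python: testing whether a ray hits an object, and computing the direction in which it bounces. The sketch below uses a sphere because its intersection test is the simplest to write; the function names and the tuple-based vectors are assumptions for this example, and real engines run the equivalent math on GPU hardware against millions of triangles.

```python
import math

def dot(a, b):
    """Dot product of two 3D vectors given as tuples."""
    return sum(x * y for x, y in zip(a, b))

def intersect_sphere(origin, direction, center, radius):
    """Distance along the ray to the nearest hit on a sphere, or None.

    direction must be a unit vector. Solves the quadratic
    |origin + t * direction - center|^2 = radius^2 for the smallest t > 0.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = 2.0 * dot(oc, direction)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0:
        return None  # the ray misses the sphere
    root = math.sqrt(disc)
    for t in ((-b - root) / 2.0, (-b + root) / 2.0):
        if t > 1e-6:  # ignore hits behind or exactly at the origin
            return t
    return None

def reflect(direction, normal):
    """Mirror a direction about a unit surface normal."""
    k = 2.0 * dot(direction, normal)
    return tuple(d - k * n for d, n in zip(direction, normal))
```

A ray fired from the origin along the z axis toward a unit sphere centered at (0, 0, 5) hits it at distance 4; reflecting that ray about the surface normal (0, 0, -1) sends it straight back. A reflection in a game is that bounce, followed by another intersection test to find what the bounced ray sees.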

Advanced haptic feedback (DualSense)

The PS5 controller goes beyond simple vibration: its linear actuators produce differentiated micro-impulses. In Astro’s Playroom, you feel the crunch of sand under the robot’s feet, then the sticky resistance of modeling clay. The adaptive triggers add a tactile layer: drawing a bow in Horizon Forbidden West requires increasing pressure, simulating physical tension. DualSense haptics create a sensory dialogue between the game and the hands, taking further what the Switch’s HD Rumble had begun. A discreet but profound revolution, and the most significant for controllers since the vibration motors of the 90s.

Conclusion: towards digital synapses?

These technological leaps outline a clear trajectory: bridging the gap between the real and the virtual. Tomorrow, cloud gaming will solve hardware limitations, machine learning will generate autonomous NPCs, and neural interfaces could replace controllers. But the real revolution will be invisible: algorithms so natural they will erase the boundary between technology and imagination. Like Mode 7 in its time, the future will belong to perfect illusions.

FAQ: Key video game technologies

- Did Mode 7 consume a lot of resources?

Not in raw processing terms: the transformation was carried out by the console's graphics hardware. The real cost was flexibility. Mode 7 allowed only a single background layer, so anything that had to stand on the transformed plane, such as karts or ships, had to be drawn with sprites.

- Why did FMV decline?

The arrival of real-time 3D made pre-rendered videos look rigid and storage-hungry. In-game cinematics offered more interactivity.

- Is ray tracing indispensable?

No, but it radically reduces artists’ work. Without it, creating realistic reflections requires manual placement of reflection probes in each level.

- Does DualSense haptics work on PC?

Yes, but partially: adaptive triggers and advanced haptic feedback only work in games whose developers have implemented support for them, and some titles require a wired USB connection.